
Stable Diffusion XL Turbo can generate AI images as fast as you can type

news.movim.eu / ArsTechnica · Wednesday, 29 November - 21:20

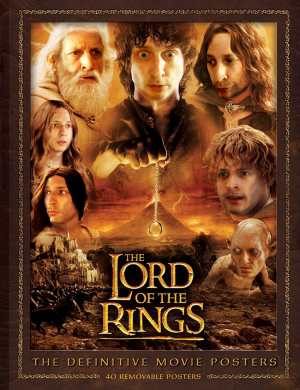

Example images generated using Stable Diffusion XL Turbo. (credit: Stable Diffusion XL Turbo / Benj Edwards)

On Tuesday, Stability AI launched Stable Diffusion XL Turbo, an AI image-synthesis model that can rapidly generate imagery based on a written prompt. So rapidly, in fact, that the company is billing it as "real-time" image generation, since it can also quickly transform images from a source such as a webcam.

SDXL Turbo's primary innovation lies in its ability to produce image outputs in a single step, a significant reduction from the 20–50 steps required by its predecessor. Stability attributes this leap in efficiency to a technique it calls Adversarial Diffusion Distillation (ADD). ADD combines two ingredients: score distillation, where the model learns from an existing, pretrained image-synthesis model, and adversarial loss, where a separate discriminator learns to tell real images from generated ones, pushing the model toward more realistic output.
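The article only describes ADD at a high level. As a rough illustration of how a training objective can combine those two ingredients, here is a minimal toy sketch in Python, not Stability's implementation. Everything in it is an assumption for illustration: the function names, the plain mean-squared distance for the distillation term, the hinge-style discriminator loss, the weight `distill_weight = 2.5`, and the toy discriminator. The real model works on image latents, uses neural networks for the teacher and discriminator, and (per the research paper) compares the student against the teacher's prediction on a re-noised version of the student's output; here `teacher_output` simply stands in for that prediction.

```python
from typing import Callable, Sequence


def mean_squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Assumed distance metric for the distillation term (plain mean squared error)."""
    if len(a) != len(b):
        raise ValueError("outputs must have the same length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def generator_loss(
    student_output: Sequence[float],
    teacher_output: Sequence[float],
    discriminator: Callable[[Sequence[float]], float],
    distill_weight: float = 2.5,  # hypothetical weighting between the two terms
) -> float:
    """Toy ADD-style objective for the single-step student (the generator)."""
    # Adversarial term: the student is rewarded when the discriminator
    # scores its output as "real" (higher score -> lower loss).
    adversarial = -discriminator(student_output)
    # Distillation term: the student is pulled toward the prediction of a
    # pretrained teacher diffusion model.
    distillation = mean_squared_distance(student_output, teacher_output)
    return adversarial + distill_weight * distillation


def discriminator_hinge_loss(real_score: float, fake_score: float) -> float:
    """Hinge loss (assumed here): push real scores above +1 and fake scores below -1."""
    return max(0.0, 1.0 - real_score) + max(0.0, 1.0 + fake_score)


if __name__ == "__main__":
    # Hypothetical toy discriminator: scores an output by its mean value.
    toy_discriminator = lambda x: sum(x) / len(x)
    student = [0.5, 0.5]
    teacher = [0.0, 1.0]
    print(generator_loss(student, teacher, toy_discriminator))  # 0.125
    print(discriminator_hinge_loss(real_score=0.8, fake_score=0.5))
```

In this toy run the adversarial term contributes -0.5 and the weighted distillation term contributes 2.5 × 0.25 = 0.625. Training would alternate between lowering `generator_loss` for the student and lowering `discriminator_hinge_loss` for the discriminator, which is what makes the setup "adversarial."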

Stability detailed the model's inner workings in a research paper released Tuesday that focuses on the ADD technique. One of the claimed advantages of SDXL Turbo is its similarity to Generative Adversarial Networks (GANs), especially in producing single-step image outputs.